So you have calculations, string manipulation, data changes, and the integration of multiple sets of data, in particular high volumes of data from different types of sources. An ETL process runs properly when it covers several things: extracting data from a source, maintaining the quality of that data, applying standard rules, and presenting the data in the various forms used in the decision-making process.
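To make those kinds of transformation concrete, here is a minimal sketch in Python. The row layouts, field names, and the `transform` and `normalize_id` helpers are all hypothetical, invented for illustration; a real ETL tool would express the same rules in its own mapping language.

```python
from decimal import Decimal

# Hypothetical rows from two different sources (illustrative data only).
orders = [
    {"order_id": 1, "customer_id": "c-01", "qty": "3", "unit_price": "19.99"},
    {"order_id": 2, "customer_id": "C-02 ", "qty": "1", "unit_price": "5.00"},
]
customers = [
    {"customer_id": "C-01", "name": "  ada lovelace "},
    {"customer_id": "C-02", "name": "GRACE HOPPER"},
]


def normalize_id(raw):
    """String manipulation: apply one standard rule to every customer key."""
    return raw.strip().upper()


def transform(orders, customers):
    """Integrate the two data sets and derive a total for each order."""
    # Data change: clean up the names and index them by the normalized key.
    names = {
        normalize_id(c["customer_id"]): c["name"].strip().title()
        for c in customers
    }
    result = []
    for order in orders:
        key = normalize_id(order["customer_id"])
        # Calculation: quantity times unit price, using Decimal for money.
        total = int(order["qty"]) * Decimal(order["unit_price"])
        result.append(
            {"order_id": order["order_id"], "customer": names.get(key), "total": total}
        )
    return result


rows = transform(orders, customers)
for row in rows:
    print(row)
```

The point of the sketch is that the rules (key normalization, name cleanup, the total calculation) live in one place, so the same standard is applied to every row regardless of which source it came from.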

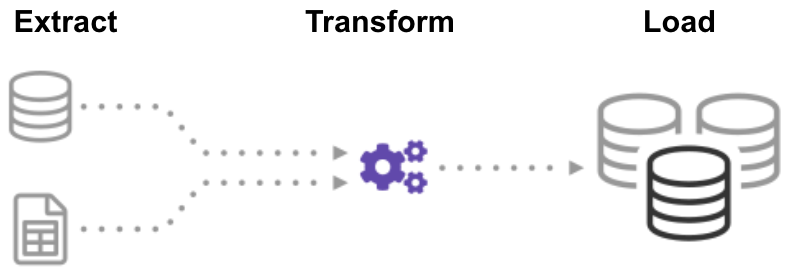

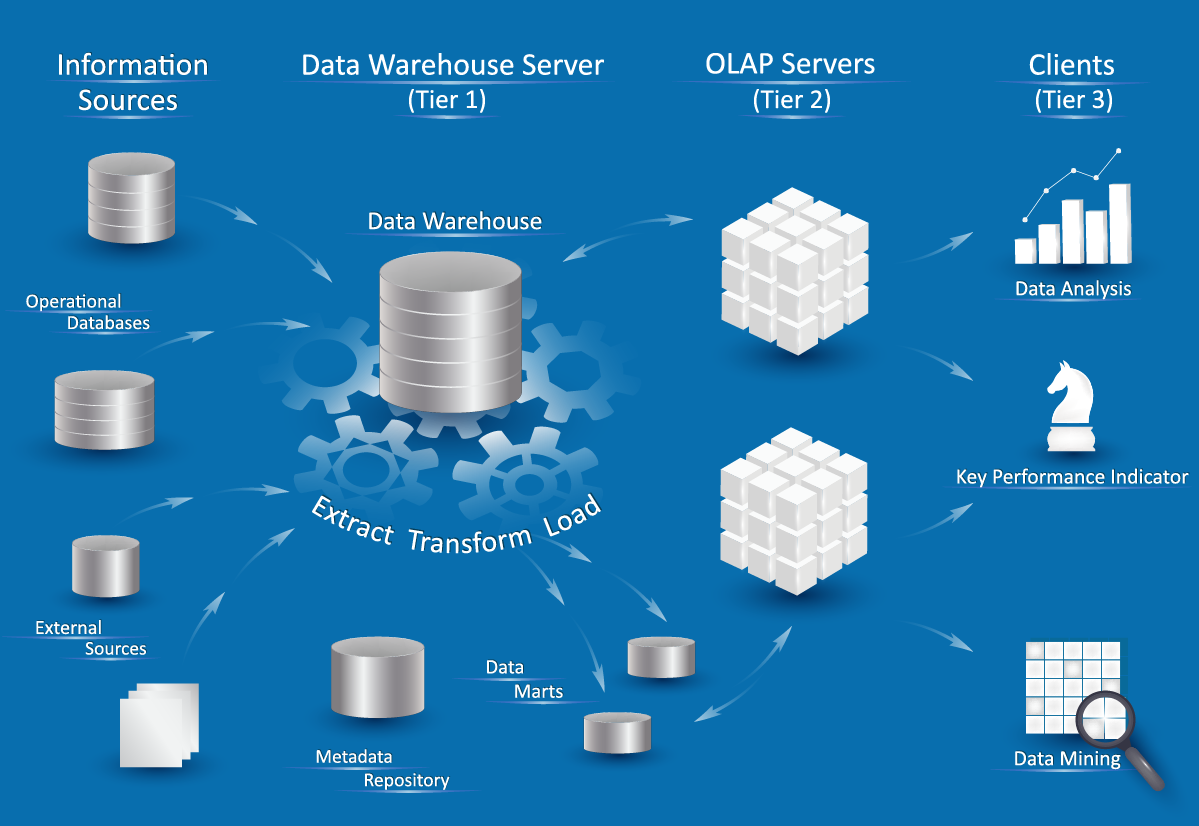

ETL stands for Extract, Transform, and Load: three database functions that are combined into one tool to pull data out of one database and place it into another database. It is a standard information management term used to describe a process for the movement and transformation of data. Extract is the process of reading data from a database. Transform is the process of converting the extracted data from its previous form into the form it needs to be in. ETL tools gather data from multiple data sources and consolidate it into a single, centralized location.

ETL tools are good for situations where you have complex rules and transformations, and that makes maintenance and traceability much easier than in a hand-coded environment. They are also good for bulk data movement: getting large volumes of data and transferring them in batch. The way the tools facilitate this is through their connection libraries and the integrated metadata stores underneath them. So you can read multiple types of databases, files, and web services, and bring all of these things together.

We are looking at somebody who understands data, not necessarily an application programmer, and the preference is, in particular, for somebody who understands something about databases and SQL, since about 80% of the time we are pulling data from databases directly.
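As a rough illustration of the three steps, the sketch below moves rows from one database to another using Python's built-in `sqlite3` module. The table names (`sales`, `sales_clean`), the columns, and the cleanup rules are assumptions made up for the example; they are not taken from any particular ETL tool.

```python
import sqlite3


def extract(conn):
    """Extract: read the raw rows from the source database."""
    return conn.execute("SELECT id, name, amount_cents FROM sales").fetchall()


def transform(rows):
    """Transform: drop rows with no amount, tidy names, convert cents to units."""
    return [
        (row_id, name.strip().title(), cents / 100)
        for row_id, name, cents in rows
        if cents is not None
    ]


def load(conn, rows):
    """Load: write the cleaned rows into the target database."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS sales_clean "
        "(id INTEGER PRIMARY KEY, name TEXT, amount REAL)"
    )
    conn.executemany("INSERT INTO sales_clean VALUES (?, ?, ?)", rows)
    conn.commit()


# Hypothetical source database with a few messy rows.
source = sqlite3.connect(":memory:")
source.execute("CREATE TABLE sales (id INTEGER, name TEXT, amount_cents INTEGER)")
source.executemany(
    "INSERT INTO sales VALUES (?, ?, ?)",
    [(1, " alice ", 1250), (2, "BOB", None), (3, "carol", 300)],
)

# Separate target database standing in for the centralized location.
target = sqlite3.connect(":memory:")
load(target, transform(extract(source)))

loaded = target.execute(
    "SELECT id, name, amount FROM sales_clean ORDER BY id"
).fetchall()
print(loaded)
```

Everything outside the three functions is setup. In practice the source and target would be different systems, and most of the extract step would be SQL against the source.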

ETL is a three-phase process: extraction of the data from one or more sources; transformation of that data so that it is clean, sanitized, and scrubbed; and loading of that data into a destination where it is analyzed and brings value to an organization. Put another way, ETL is the process by which data is extracted from data sources that are not optimized for analytics and moved to a central host that is. What these tools basically do is pull data out of one or even multiple databases and place it into another database. The exact steps in that process might differ from one ETL tool to the next, but the end result is the same. Zero-ETL, as currently constituted, glosses over a number of ...

ETL tools themselves are geared towards data-oriented developers and DBAs. So these aren't the kind of tools that are really aimed at application developers, who are more into procedural coding and third-generation languages. The way it works, in a sense, is that the tool takes these rules and either runs them through an engine or generates code into an executable, which then runs within your production environment.

Most ETL tools are run in batch mode because that's where they have evolved from. So there is some kind of an event that triggers the extract. Or it's schedule-driven, and the schedule dictates that at such and such a time, you'll run this particular extract. And then there can be dependencies in the schedule, so that if one thing executes successfully, another thing can be triggered to run.
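The dependency idea can be sketched in a few lines of Python. This is not how any specific scheduler works; `run_jobs`, the job names, and the status strings are hypothetical, and the sketch assumes the jobs are listed with upstream jobs first.

```python
def run_jobs(jobs, dependencies):
    """Run jobs in listed order; a job runs only if every upstream job succeeded."""
    status = {}
    for name, func in jobs.items():
        upstream = dependencies.get(name, [])
        if not all(status.get(dep) == "success" for dep in upstream):
            # At least one dependency failed, was skipped, or has not run.
            status[name] = "skipped"
            continue
        try:
            func()
            status[name] = "success"
        except Exception:
            status[name] = "failed"
    return status


log = []


def extract_orders():
    log.append("extract_orders")


def extract_customers():
    # Simulate a source system that is down during the batch window.
    raise RuntimeError("source unavailable")


def load_orders():
    log.append("load_orders")


def build_report():
    log.append("build_report")


# Hypothetical batch: dict order is the run order (upstream jobs come first).
jobs = {
    "extract_orders": extract_orders,
    "extract_customers": extract_customers,
    "load_orders": load_orders,
    "build_report": build_report,
}
dependencies = {
    "load_orders": ["extract_orders"],
    "build_report": ["load_orders", "extract_customers"],
}

status = run_jobs(jobs, dependencies)
print(status)
```

Here `load_orders` runs because its only upstream job succeeded, while `build_report` is skipped because one of its two upstream extracts failed. A real scheduler would add the clock or event trigger that starts the batch in the first place.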
